Who Controls Intelligence?

Horizon #10: Infrastructure, full-stack control, and the hidden risks behind the AI boom

Who is winning the AI race, and what does “winning” even mean?

Most answers still focus on models, benchmarks, and product launches: which lab shipped the most capable system this quarter. But this framing increasingly obscures what actually determines power in AI.

As artificial intelligence moves from experimentation to mass deployment, leadership is being shaped less by algorithmic breakthroughs and more by something far less visible: infrastructure. Compute capacity. Energy access. Capital intensity. The ability to finance and operate intelligence at industrial scale.

In other words, AI is no longer behaving like a typical software revolution. It is starting to look like an infrastructure business, with all the concentration, advantages, and risks that implies.

This raises a more uncomfortable question: will the winners of AI be the companies with the smartest models, or the ones that control the power, capital, and full stack required to run intelligence at scale?

You can also follow me and get in touch on my personal media:

Linkedin · X · ipotolot@gmail.com

AI has stopped behaving like software

For most of the past two decades, technology leadership followed a familiar pattern. Software scaled cheaply. Marginal costs approached zero. Infrastructure faded into the background. Distribution and network effects determined outcomes.

Generative AI breaks this logic.

Running AI systems has a real, recurring marginal cost. Every inference consumes compute. Compute consumes electricity. Electricity requires land, grids, permits, cooling, and long-term contracts. These constraints do not disappear with better code.

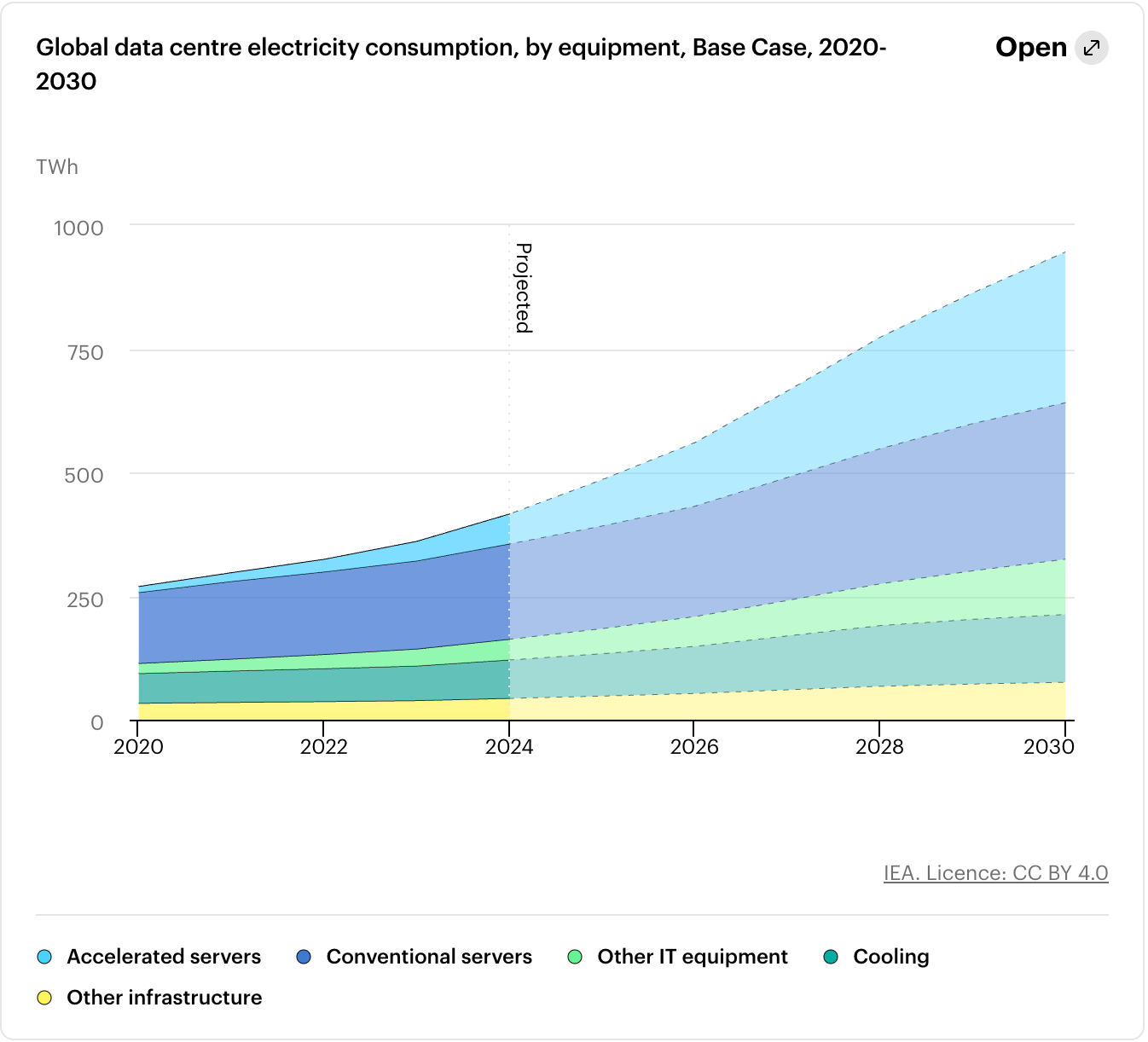

The International Energy Agency estimates that global data center electricity consumption was ~415 TWh in 2024, roughly 1.5% of global electricity use, and that it is on track to double by 2030 as AI workloads expand.

Source: IEA
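To make the IEA projection concrete, the short sketch below computes the compound annual growth rate implied by a doubling from the ~415 TWh 2024 baseline to roughly 830 TWh in 2030. It assumes a clean doubling over exactly six years, which is a simplification of the IEA's more detailed scenarios.

```python
# Implied compound annual growth rate (CAGR) if global data center electricity
# use doubles from the IEA's ~415 TWh (2024) by 2030.
# Assumption: an exact doubling over six years, as a simplification.
BASE_YEAR, TARGET_YEAR = 2024, 2030
BASE_TWH = 415.0
TARGET_TWH = 2 * BASE_TWH  # ~830 TWh


def cagr(start: float, end: float, years: int) -> float:
    """Constant yearly growth rate that turns `start` into `end` over `years`."""
    return (end / start) ** (1 / years) - 1


years = TARGET_YEAR - BASE_YEAR
rate = cagr(BASE_TWH, TARGET_TWH, years)
print(f"Implied growth: {rate:.1%} per year over {years} years")

# Year-by-year path at that constant rate (rounded to whole TWh).
for offset in range(years + 1):
    print(BASE_YEAR + offset, round(BASE_TWH * (1 + rate) ** offset), "TWh")
```

A doubling in six years works out to roughly 12% growth per year, sustained every year through 2030.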

Software once scaled primarily on bandwidth and distribution. AI now scales on electricity and silicon.

This shift changes what an AI platform means. The platform is no longer just an API, a model, or a developer ecosystem. It is the entire system that converts electricity into usable intelligence.

The AI stack: where that power actually sits

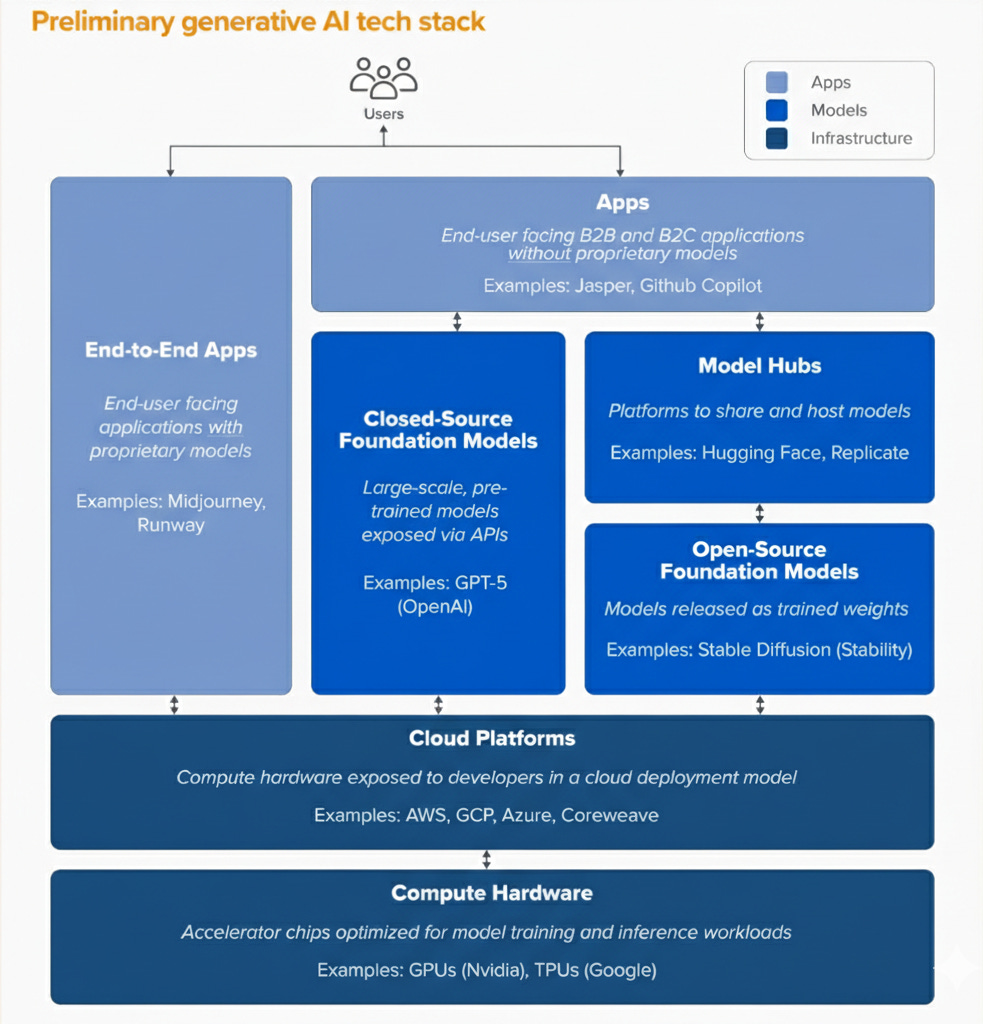

To understand how this translates into competitive advantage, AI must be understood not as a product, but as a stack.

A simplified view looks like this:

Source: a16z

Each layer depends on the one below it.

Applications depend on models. Models depend on serving capacity. Serving depends on infrastructure, including data centers, chips, power, and land.

Dependency is not symmetric. Every layer relies on infrastructure for execution, but infrastructure remains more durable and less easily substituted than the layers above it. This asymmetry helps explain why revenue, capital intensity, and strategic leverage are currently concentrated at the infrastructure and cloud layers.
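The asymmetry can be illustrated with a minimal model of the four layers named above (infrastructure, serving, models, applications), assuming each depends only on the layer directly beneath it. This is a deliberate simplification, not a reproduction of the a16z diagram: a failure at one layer propagates to everything above it, never below.

```python
# Minimal model of the simplified AI stack, listed bottom to top.
# Assumption: each layer depends only on the layer directly below it.
STACK = ["infrastructure", "serving", "models", "applications"]


def depends_on(layer: str) -> list[str]:
    """Every layer that `layer` relies on, directly or transitively."""
    return STACK[: STACK.index(layer)]


def affected_by_outage(layer: str) -> list[str]:
    """Layers that stop working if `layer` fails: itself plus all layers above."""
    return STACK[STACK.index(layer):]


for layer in STACK:
    hit = affected_by_outage(layer)
    print(f"{layer:>14}: an outage disrupts {len(hit)} of {len(STACK)} layers")
```

In this toy model an infrastructure outage disrupts all four layers, while an application failure disrupts only itself, which mirrors the leverage asymmetry described above.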

This dynamic is already visible in revenue distribution.

Nvidia’s data center revenue more than tripled year over year in the first wave of the AI build-out, rising from roughly $15 billion in fiscal year 2023 to over $47 billion in fiscal year 2024. Hyperscalers, meaning large cloud platform operators such as Amazon, Microsoft, and Alphabet, have similarly tied cloud growth to AI demand. Microsoft’s Intelligent Cloud segment generated approximately $96 billion in FY2024 revenue, while AWS produced roughly $90 billion and Google Cloud surpassed $33 billion in calendar 2023, with all three highlighting AI workloads as a meaningful driver of incremental growth.
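As a quick check on "more than tripled", the rounded figures quoted above work out as follows. Values are in billions of USD and taken from the text; precise reported figures differ slightly.

```python
# Year-over-year growth in Nvidia data center revenue, using the rounded
# figures quoted above (billions of USD).
prior_year = 15.0    # "roughly $15 billion"
current_year = 47.0  # "over $47 billion" (treated as a lower bound)

multiple = current_year / prior_year
growth = multiple - 1
print(f"{multiple:.2f}x the prior year, i.e. +{growth:.0%} year over year")
```

On these inputs the multiple is a little above 3x, consistent with the "more than tripled" description.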

Leading model-layer companies have also scaled rapidly and now generate multi-billion-dollar revenues (roughly $20 billion for OpenAI and $10 billion for Anthropic in 2025). However, aggregate infrastructure and cloud revenues remain substantially larger, and hyperscaler capital expenditures alone exceed the revenues of even the fastest-growing model companies.

Pure application-layer startups such as Gamma, Lovable, or Harvey are growing rapidly but generally operate in the hundreds of millions of dollars in annual recurring revenue.

At this stage of the cycle, the center of gravity in realized AI revenue remains concentrated in infrastructure and cloud layers, even as model-layer revenues accelerate.

Where capital meets constraint

The scale of current investment makes this unmistakable.

Over the next year alone, Amazon, Alphabet, Microsoft, and Meta Platforms are expected to deploy over $650 billion in capital expenditures, with an estimated 70–75% directed toward AI-related infrastructure.

Concrete numbers make the point clearer:

Amazon is projected to spend close to $200 billion in CapEx, largely driven by AWS data centers and AI compute.

Alphabet (Google) has guided toward roughly $175–185 billion, heavily weighted toward AI infrastructure, TPUs, and facilities.

Microsoft is expected to invest $110–120 billion, tied to Azure expansion and AI deployment.

Meta has signaled $115–135 billion, primarily for AI training clusters and inference capacity.

For context, total U.S. highway spending averages roughly $200 billion annually, while U.S. electric utilities invest around $180 billion per year in capital expenditures. Hyperscaler AI CapEx now exceeds both, placing it on the scale of traditional industrial infrastructure sectors.
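The per-company guidance and the comparison above can be tallied directly. This sketch uses only figures quoted in this section. Summing the listed ranges gives roughly $600–640 billion, slightly below the "over $650 billion" headline, so both are best read as approximate estimates; the 70–75% AI share is applied to the $650 billion headline figure.

```python
# CapEx guidance quoted above, as (low, high) in billions of USD.
# Amazon is quoted as a single figure ("close to $200 billion").
capex_guidance = {
    "Amazon": (200, 200),
    "Alphabet": (175, 185),
    "Microsoft": (110, 120),
    "Meta": (115, 135),
}

listed_low = sum(low for low, _ in capex_guidance.values())
listed_high = sum(high for _, high in capex_guidance.values())
print(f"Sum of listed guidance: ${listed_low}-{listed_high}B")

# AI-related share (70-75%) applied to the ~$650B headline aggregate.
HEADLINE_TOTAL = 650
ai_low = HEADLINE_TOTAL * 70 / 100
ai_high = HEADLINE_TOTAL * 75 / 100
print(f"AI-related CapEx: ${ai_low:.1f}-{ai_high:.1f}B")

# Annual U.S. benchmarks quoted in the text (billions of USD).
benchmarks = {"U.S. highway spending": 200, "U.S. electric utility CapEx": 180}
for name, value in benchmarks.items():
    print(f"AI CapEx vs {name}: {ai_low / value:.1f}x-{ai_high / value:.1f}x")
```

Even the low end of the AI-related estimate, about $455 billion, is more than twice either benchmark.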

Traditional software companies optimized for asset-light scalability and high margins. Hyperscalers are now optimizing for long-lived physical capacity.

Infrastructure has shifted from background support to primary constraint.

Ownership vs. dependency: the first real divide

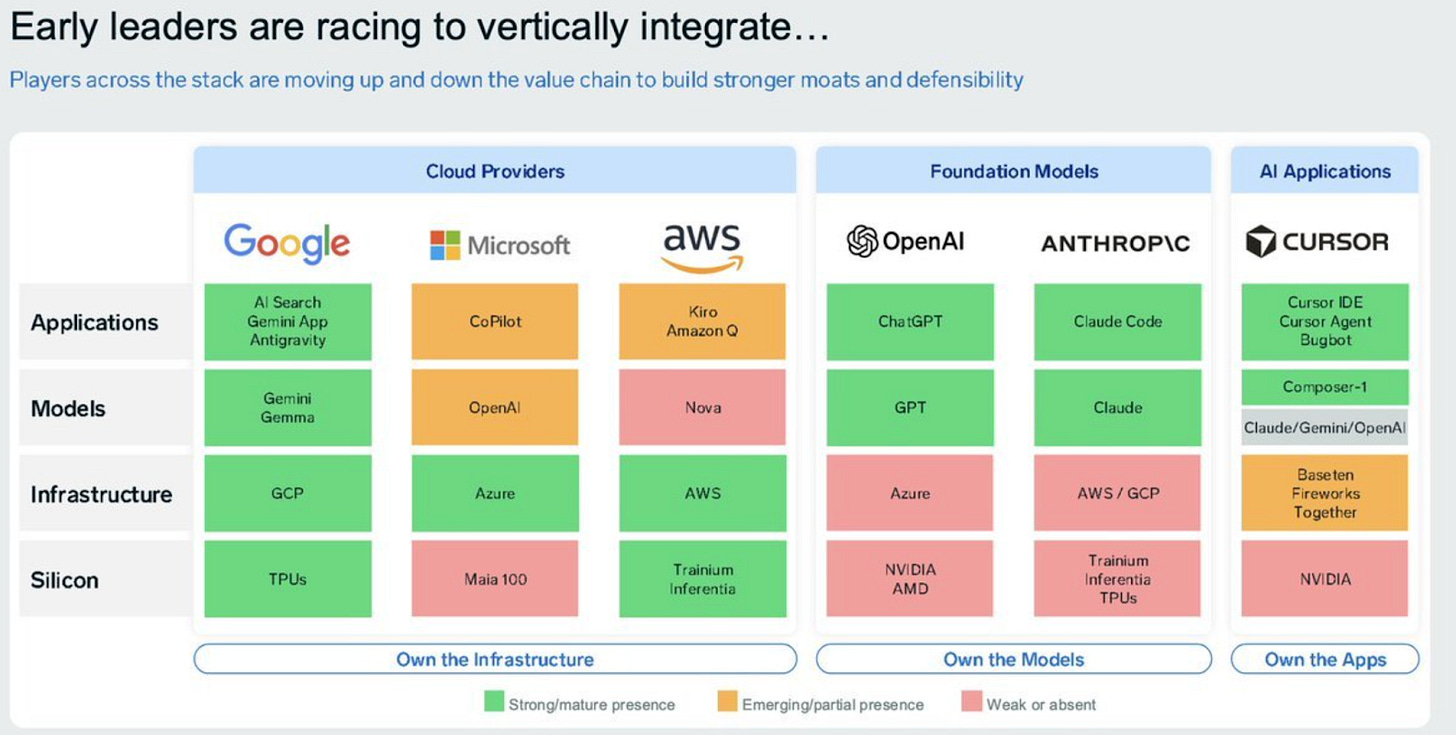

At this point, model quality alone is no longer sufficient to assess long-term positioning. What matters is who controls how much of the stack.

Source: Rohan Paul

Google remains the clearest example of vertical integration. It owns custom silicon through TPUs, hyperscale global data centers, private high-performance networking, frontier models, and first-party distribution across Search, Android, YouTube, Chrome, and Workspace. This integration allows it to influence cost curves, deployment prioritization, and internal allocation of compute resources.

Other leading AI labs operate under a different structure.

OpenAI is deeply intertwined with Microsoft, which holds a roughly 27% stake in OpenAI on an as-converted diluted basis and provides much of the underlying cloud, compute, and distribution layer via Azure. OpenAI supplies the models; Microsoft controls the physical base.

A similar pattern appears with Anthropic and Amazon. Anthropic develops advanced models. Amazon provides cloud infrastructure, chips, and physical scale through AWS.

These partnerships are rational responses to capital intensity. However, they create structural dependency. Model labs rely on hyperscalers for compute access, while hyperscalers ultimately control allocation decisions and infrastructure investment pace.

The hidden risk behind the infrastructure advantage

If infrastructure control creates competitive advantage, it also introduces structural risk.

Hyperscalers are committing hundreds of billions of dollars in capital expenditures before long-term demand curves are fully validated. AI usage is expanding rapidly across consumer and enterprise markets, and large platforms are embedding generative AI into core products. However, monetization remains uneven across the stack.

Revenue concentration is visible. Infrastructure providers and cloud platforms capture a disproportionate share of realized AI revenue, while many enterprises remain in experimentation phases with uncertain timelines for measurable productivity gains or revenue expansion.

Enterprise adoption intent is strong, but realized economic returns are more uneven. McKinsey’s 2025 State of AI survey reports that roughly 65 percent of organizations use AI in at least one business function, and around half deploy generative AI tools. Yet only a minority report material operating profit contribution from these initiatives so far.

This imbalance between infrastructure expansion and downstream profitability creates the conditions for potential capital overshoot. When multiple firms expand capacity simultaneously to avoid supply constraints in a competitive cycle, aggregate compute supply can grow faster than durable, profit-generating demand capable of supporting long-term infrastructure returns. Similar dynamics appeared during the telecom fiber buildout of the late 1990s and in cyclical semiconductor expansions, where capacity expanded ahead of sustained demand realization.

Each firm’s decision may be rational in isolation. Collectively, synchronized expansion increases the risk of excess capacity if demand growth moderates or efficiency gains reduce compute intensity per task.
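The coordination problem can be made concrete with a deliberately simple toy model. All parameters here (four firms, each building for a 30% share of forecast demand, a 20% demand shortfall, a 30% efficiency gain) are hypothetical and chosen only for illustration: each firm's plan looks reasonable on its own, yet together they build for 120% of forecast demand.

```python
from dataclasses import dataclass


@dataclass
class Firm:
    name: str
    target_share: float  # share of *forecast* demand this firm builds capacity for


def fleet_utilization(firms, forecast_tasks, realized_tasks, efficiency_gain,
                      compute_per_task=1.0):
    """Aggregate utilization = realized compute demand / total built capacity.

    Capacity is sized from forecast task volume at today's compute per task.
    Realized demand uses actual task volume and a compute-per-task figure that
    has fallen by `efficiency_gain` (0.3 means 30% less compute per task).
    """
    capacity = sum(f.target_share for f in firms) * forecast_tasks * compute_per_task
    realized = realized_tasks * compute_per_task * (1 - efficiency_gain)
    return realized / capacity


# Hypothetical: four firms, each independently planning for 30% of the market.
firms = [Firm(f"Firm {c}", 0.30) for c in "ABCD"]

scenarios = {
    "forecast met, no efficiency gain": (100, 0.0),
    "demand 20% below forecast": (80, 0.0),
    "forecast met, 30% efficiency gain": (100, 0.3),
    "both": (80, 0.3),
}
for label, (realized_tasks, gain) in scenarios.items():
    u = fleet_utilization(firms, 100, realized_tasks, gain)
    print(f"{label:<36} utilization {u:.0%}")
```

Even when the forecast is exactly right, a fleet built on overlapping share ambitions runs at roughly 83% utilization in this sketch; a modest demand miss combined with efficiency gains pushes it below half.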

Financing behavior reinforces the long-horizon nature of these bets. In February 2026, Alphabet announced plans to issue a 100-year bond, an instrument typically associated with utilities, railroads, and sovereign-scale infrastructure, not fast-moving software companies. The implication is clear: AI infrastructure is being financed on multi-decade horizons, with long-term demand assumptions baked in.

Energy constraints add another layer of fragility. Data centers depend on electricity grids that were not designed for this level of concentrated demand. Permitting, transmission upgrades, and grid interconnections take years, not quarters. Capital can move quickly; power cannot.

As AI capacity concentrates among a small number of actors, efficiency increases but resilience declines. Outages matter more. Policy decisions matter more. Geopolitical tensions matter more. Smaller players face rising barriers to entry.

The same infrastructure that creates dominance also creates single points of failure.

Conclusion: Winning AI may mean surviving its own success

So, who controls intelligence?

In the short term, leadership belongs to those delivering the most capable models and fastest deployments. In the longer term, advantage appears to shift toward those able to finance, own, and operate the full AI stack, from silicon and data centers to models and distribution.

Full-stack ownership provides cost control, allocation flexibility, and strategic optionality. Deep partnerships provide scale but embed dependency.

The firms best positioned to dominate AI infrastructure are also those most exposed if demand growth slows or capital cycles tighten.

AI is becoming an industrial system shaped by capital allocation discipline, energy availability, and macroeconomic cycles as much as by model innovation.

The defining question of the next phase is not simply who will win AI. It is whether the current infrastructure expansion proves economically sustainable, or whether it lays the groundwork for the next overbuild cycle.

The answer will be found in balance sheets, power grids, and capital discipline.

Idriss 🌞